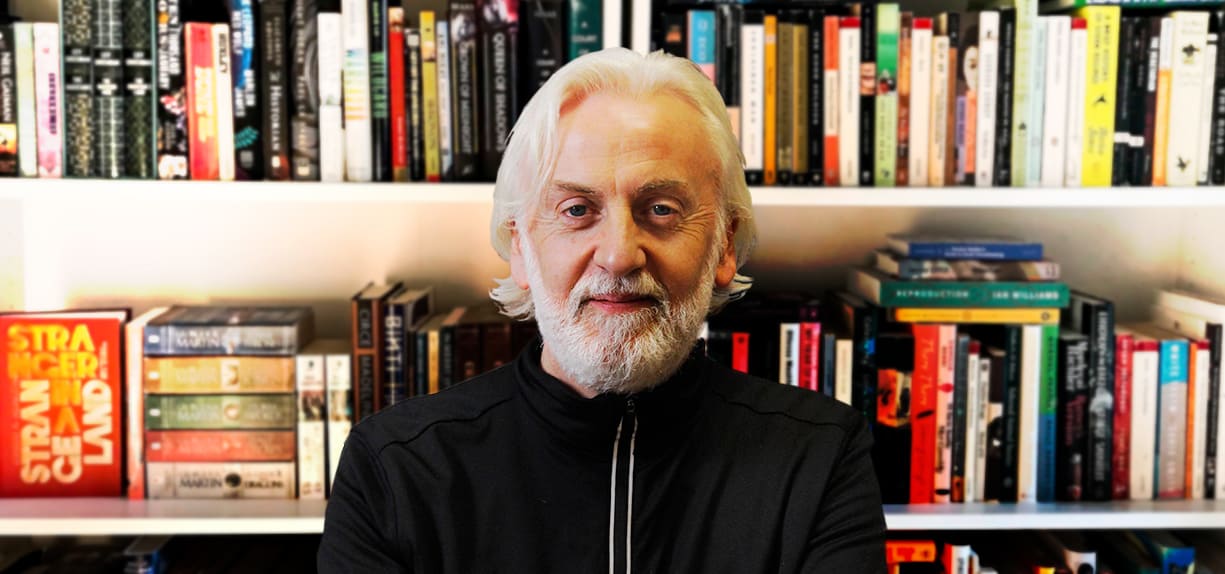

Pictured: Dr. Robbie Smyth, Head of the Faculty of Journalism and Media Communications, and Member of the Management Board, Griffith College, Dublin

By Dr. Robbie Smyth, Head of the Faculty of Journalism and Media Communications, and Member of the Management Board, Griffith College, Dublin.

We have arrived at a critical juncture in the development of internet-oriented technologies and the seemingly infinite number of applications and uses they provide. All of the key stakeholders, whether lobby and interest groups, governments or the tech giants themselves, accept that there must be positive change in how the internet, and particularly its social media platforms, is regulated. They all acknowledge we cannot continue on the current negative trajectory.

In 2022, this is the key communications industry regulation issue, and to resolve it, everything must be on the table. This includes questions like:

- What limits, if any, should there be on freedom of speech online?

- Who gets to determine this? Is it a national or transnational question?

- Who will police and monitor these decisions? Will it be the platforms, national statutory agencies, or the transnational organisations, super regulators spun out of entities like the EU?

- How should platforms and governments respond to disinformation online?

- How do we as a society in Ireland, or transnationally in the EU, deal with existential, extranational threats originating online?

- Where do we begin to tackle the questions of algorithmic accountability and transparency thrown up by our dependence on the internet for not only communication and social interaction but as a marketplace where firms small and large do business minute by minute?

Across the world these questions are being asked and answered in different ways. The tech platforms such as Alphabet, Amazon, Apple, Meta and Microsoft want to protect their profitability and a phase of continued growth and market dominance, unprecedented in modern economies, and only paralleled in history by firms such as the East India Company or Standard Oil in their respective epochs.

Governments want to protect and fortify their power to legislate and govern, and do not want an online society weaponised to undermine this. Users want to know not only that it is safe to go online, but that they can assess with accuracy the provenance and veracity of the information presented on their screens. This applies whether the source is a Facebook news feed, Instagram reels, or any of the myriad information sources that find their way onto Twitter, YouTube or even the over-shared strange and dubious memes that are a guilty pleasure for too many of us.

The Media Commission announced in Ireland last week as part of the Online Media Regulation Bill is one small welcome step, but its budget and scope must be geared towards the reality of the scale of the regulation challenge facing us.

One aspect of the challenge facing the fledgling Media Commission is that Ireland is emerging as a new form of international digital hub, this time as a centre for the online Trust and Safety sector.

Thousands of people based in Ireland are working often long, demanding shifts to keep the internet safe; their numbers are increasing and could double over the next decade. They are on the front line of an ongoing clash with online disinformation and deviance, where bad actors systematically attempt to undermine the ideal of the internet as an open, accessible and dependable global information and communication source.

In Griffith, we have developed a new Postgraduate Diploma in Trust, Safety and Content Moderation Management. It is an interdisciplinary course with modules designed by lecturers from our Media and Computing Faculties and Graduate Business School, along with key inputs and advice from companies like Kinzen, a leader in strategies to combat online disinformation.

The programme’s aim is to enhance the skills of these frontline moderation workers. It does this by developing competencies in project management and communications, while deepening understanding of the software technologies deployed across the sector. It also draws on the widening academic study of the internet’s sociological impacts, the resulting issues of societal disruption and, ultimately, the questions of online ethics and regulation. There is also a key module on self-care practice in the workplace.

Regulatory strategies work best when everyone at the table is able to make their inputs heard. So, as we consider the rollout of the EU’s Digital Services Act, the responses across the USA at state and federal level, and those from within the tech platforms themselves, it is incumbent on all the players, but particularly government and its agencies, to ensure that all parties are given an input into the transforming regulatory environment. This should include everyone from those on the trust and safety frontline to the end users making reels, videos or just another overshared meme. Maybe we need a citizens’ assembly on internet regulation!